Design a Search Autocomplete System

Simple to type, hard to scale. The typeahead box you've used a thousand times requires a surprisingly deep set of distributed systems decisions.

What the interviewer is testing

Autocomplete looks trivial from the outside, you type a letter, a dropdown appears. But underneath it requires a data structure that answers prefix queries in microseconds, a ranking model that surfaces the most relevant suggestions, a caching hierarchy that absorbs a 10× read spike, and a pipeline that keeps scores fresh without rebuilding from scratch every hour.

The interviewer is probing whether you can reason about the tension between query-time latency (the user notices anything over 100ms) and data freshness (yesterday's trending topic should appear today). These two goals pull in opposite directions: freshness pushes toward more frequent updates, which strain a live trie; latency pushes toward aggressive caching, which delays propagation of new trends.

| Level | What good looks like |

|---|---|

| L3/L4 | Trie for prefix matching, top-k pre-computed per node, basic LRU cache in front |

| L5 | Offline score aggregation pipeline, trie sharding strategy, CDN edge caching for common prefixes, debounce on client |

| L6 | Separate trending trie refreshed every few minutes, personalization re-ranking layer, graceful degradation under trie rebuild |

| L7/L8 | Multi-region active-active deployment, global vs locale-specific tries, cost model for trie memory vs read latency, experimentation on ranking weights |

Requirements clarification

Before any architecture discussion, establish what the system actually needs to do. These answers determine almost every architectural decision that follows.

Functional requirements

| Requirement | Details |

|---|---|

| Prefix-based suggestions | Return top-k completions for the current input prefix |

| Ranked output | Results ordered by global popularity (and optionally personalised) |

| Freshness | New trending queries appear in suggestions within minutes, not days |

| Multiple languages / locales | Suggestions are locale-aware (different tries or filtered views) |

| Safe content | Filter offensive or sensitive prefixes before serving |

Non-functional requirements

| NFR | Target | Why this number |

|---|---|---|

| p99 latency | < 100ms end-to-end | Studies show users notice dropdown lag above ~150ms; 100ms leaves margin for network |

| Availability | 99.99% (≈52 min/year) | Autocomplete failure degrades search UX globally; stale fallback acceptable |

| Suggestion freshness | < 15 minutes for trending | Viral events move fast; hourly lag loses trust |

| Scale | 100M DAU, 3 searches/user/day | Baseline scenario; peak 3–5× burst at news events |

| Top-k results | k = 5 to 10 | UI shows 5–10 rows; more is noise |

p99 < 100ms, what it drives ›

100ms end-to-end means the backend must respond in <30ms after subtracting network RTT and client rendering. This forces in-process or Redis-resident trie lookups, a disk-backed store simply cannot deliver this. It also mandates CDN edge caching for the most frequent prefixes, where the client receives results from a POP <10ms away.

Freshness < 15 min, what it drives ›

A 15-minute SLA rules out nightly batch rebuilds of the full trie. It requires a streaming aggregation pipeline (Kafka + Flink) feeding score deltas to a "hot trie" or a separate trending index. The main trie can still be rebuilt hourly or daily; the trending overlay carries the recency signal.

99.99% availability, what it drives ›

99.99% = ~52 minutes of downtime per year. During a trie rebuild (which can take minutes for a large corpus), the system must serve stale results from the previous snapshot rather than return errors. Read replicas of each trie shard and a fallback static prefix list enable graceful degradation.

Capacity estimation

With 100M DAU and 3 searches per user per day, each search session generates roughly 3 autocomplete API calls (after client debouncing removes redundant mid-word keystrokes). The write side, ingesting query events to update scores, is a fraction of the read side.

Interactive estimator

⚠ Node count ≠ term count. A 100M-term corpus shares common prefixes, actual node count is lower. Node size of 150B includes the top-k list overhead (k=10 entries × ~12B each ≈ 120B) plus the children pointer map.

Key architectural implication: At 100M DAU with 3 searches × 3 AC calls, average read QPS is ~10.4K and peak (5×) is ~52K. The trie itself fits comfortably in RAM on a single large host (~15GB for 100M terms at 150B/node). The bottleneck is not memory, it's CPU contention on concurrent prefix traversals at peak QPS. In steady state, horizontal read replicas of each trie shard are needed even before any geographic distribution. Shard by prefix character range (A–G, H–N, O–Z) so each replica serves a focused key space.

High-level architecture

High-level architecture, solid blue = synchronous read path; dashed amber = asynchronous write / build pipeline

Client applies a 150–300ms debounce before sending each request, preventing a separate API call for every keystroke and cutting effective QPS by 3–5×.

CDN Edge caches the top-k suggestions for the most common prefixes (typically 1% of all prefixes account for 80%+ of traffic). A TTL of 60 seconds balances freshness against hit rate; trending prefixes get a shorter TTL or explicit invalidation.

API Gateway handles TLS termination, rate limiting per user/IP, and load balancing across Query Service instances.

Query Service performs an in-process or Redis-backed trie lookup, merges results from the trending overlay, applies the personalization layer, and returns the final top-k list.

Trie Shards are in-memory data stores holding pre-ranked top-k lists at every trie node. Sharding is by first-character range to distribute load.

Trending Service maintains a "hot prefix" overlay rebuilt every few minutes from the streaming pipeline output. It stores only the delta, prefixes whose scores have changed significantly - to enable fast merges with the base trie.

Stream Pipeline (a durable message queue such as Kafka feeding a stream processor such as Flink) ingests all query events, windowed-aggregates counts per prefix, and emits score updates to the Trending Service.

Query Log DB (an object store such as S3 feeding a columnar analytics warehouse such as BigQuery) retains the full query log for the hourly/daily Trie Builder batch job.

Trie Builder runs as a batch job (a distributed compute engine such as Spark) that recomputes global popularity scores across the full retention window and produces a new serialized trie snapshot that is atomically swapped in.

Architectural rationale

Why separate the Trending Service from the base trie? ›

The base trie is large (~15GB) and rebuilding it takes tens of minutes. An in-place update of a node mid-traversal requires locking that increases read latency. The Trending Service holds only the small set of prefixes that have experienced recent score changes, so it can be rebuilt in seconds. The Query Service merges both views at query time, a union of the stable base trie and the hot overlay, without ever touching the base trie's write path.

Why CDN edge cache, not just API server cache? ›

A server-side cache still requires a round-trip to the nearest data centre. For users on the other side of the world, that can be 80–150ms alone, which already blows the 100ms budget. CDN POPs (Points of Presence) sit within 10–20ms of most users and can serve the cached top-k for common prefixes with negligible latency. For a system where 1% of prefixes drive 80% of traffic, the CDN hit rate is very high and the cache payload per prefix is tiny (5–10 strings).

Real-world comparison

| Decision | This design | Google Search | Twitter/X |

|---|---|---|---|

| Core data structure | In-memory trie + hot overlay | Distributed prefix index (proprietary) | Inverted index (Earlybird) |

| Score freshness | Streaming + batch hybrid | Sub-minute streaming | Sub-minute streaming (trending focus) |

| Personalisation | Re-ranking layer (optional) | Deep personalisation (query history, location) | Follow graph + recency boosting |

| Edge caching | CDN TTL 60s + explicit invalidation | Global CDN; per-locale caches | CDN; trending invalidated immediately |

There is no single right data structure. Google and Twitter reach different conclusions because their access patterns differ, Google needs global prefix coverage across hundreds of languages; Twitter prioritises recency over breadth. Requirements determine the answer.

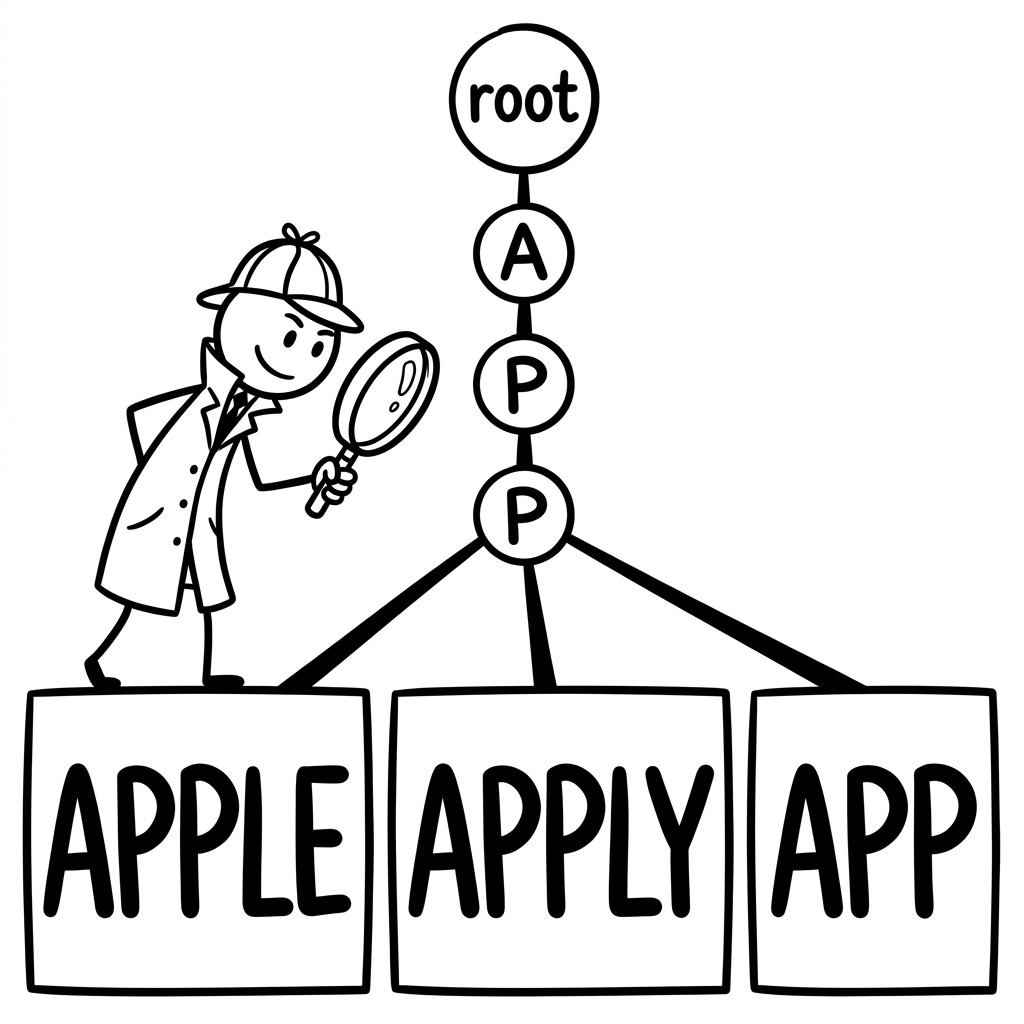

Core algorithm: Trie vs Inverted Index

Every autocomplete system has one central algorithmic question: how do you find all strings that share a given prefix, quickly? Two primary approaches dominate production systems, and they involve fundamentally different tradeoffs around memory, flexibility, and operational complexity.

The classic trie example (to, tea, ted, ten, in, inn). Left: each shared prefix is a single node, "t" is traversed once regardless of how many words start with it. Right: the inverted index stores a separate row per prefix; interior rows ("t", "te", "i") duplicate completions that the trie represents with shared pointer hops. Highlighted green = word terminals ($).

Our choice for this system

A trie is the right structure for a general-purpose prefix autocomplete. It maps naturally to prefix traversal, stores exactly one top-k list per node (so no merge is needed at query time for the base case), and fits entirely in memory for a 100M-term corpus. The inverted index approach is preferable when the system also needs fuzzy matching (correcting typos), multi-word completions, or language-specific stemming, capabilities an enterprise search platform like Elasticsearch provides out of the box.

One open question: How do you update a trie node's top-k list when a query's score changes? A naive approach recomputes top-k lists at all affected ancestor nodes on every score change, expensive for a large corpus. Production systems often decouple the score store from the trie structure: nodes store references to a score lookup table rather than inline scores, and the table is updated independently. The top-k list at each node is then regenerated lazily or on a schedule, not on every score change.

Code: trie lookup with top-k at each node Expand for implementation ›

// Each node stores: children map + pre-ranked top-k list

class TrieNode {

constructor() {

this.children = new Map(); // char → TrieNode

this.topK = []; // [{term, score}] pre-sorted, length ≤ k

}

}

function query(root, prefix, k = 5) {

let node = root;

for (const ch of prefix) {

if (!node.children.has(ch)) return []; // no completions

node = node.children.get(ch);

}

// O(L) traversal, then O(1) read of the pre-ranked list at the terminal node

return node.topK.slice(0, k);

}

function insertWithScore(root, term, score, k = 10) {

// O(L × k log k) per term, build top-k in batch (Trie Builder), not on each live insert

// Start by updating root (handles empty-prefix queries, e.g. showing global top-k on focus)

const _upsert = (node) => {

const existing = node.topK.findIndex(e => e.term === term);

if (existing >= 0) node.topK.splice(existing, 1);

node.topK.push({ term, score });

node.topK.sort((a, b) => b.score - a.score);

if (node.topK.length > k) node.topK.pop();

};

_upsert(root); // root top-k = global top-k for empty prefix ""

let node = root;

for (const ch of term) {

if (!node.children.has(ch))

node.children.set(ch, new TrieNode());

node = node.children.get(ch);

_upsert(node); // update top-k at every prefix node along the path

}

}API design

The read side needs one endpoint; the write side (query logging) is fire-and-forget. Both are simple by design, autocomplete latency budgets leave little room for complex server-side logic at the API layer.

GET /v1/autocomplete

Returns top-k suggestions for a given prefix. Uses GET because the operation is idempotent and cacheable , the CDN caches this endpoint by query string.

GET /v1/autocomplete?q=appl&k=5&locale=en-US&session_id=abc123

{

"prefix": "appl",

"suggestions": [

{ "term": "apple", "score": 9.2, "source": "global" },

{ "term": "apple store", "score": 8.1, "source": "global" },

{ "term": "apple watch", "score": 7.4, "source": "trending" },

{ "term": "apple iphone", "score": 6.9, "source": "global" },

{ "term": "apple pie", "score": 5.3, "source": "personalized" }

],

"served_by": "edge", // or "origin", for observability

"latency_ms": 8

}CDN cache key, session_id must be excluded. If the CDN caches by full query string,

every unique session_id produces a separate cache entry, completely defeating the CDN

layer. Strip it from the cache key via a CDN normalisation rule: Cloudflare Cache Rules → exclude

query param session_id; Fastly VCL → unset req.http.session_id before

vcl_hash. The stripped value is forwarded to origin as a request header for logging and

personalisation.

POST /v1/query-log (async, fire-and-forget)

Clients log completed searches (not every prefix keystroke) to feed the scoring pipeline. This is sent asynchronously after the user submits, it must not block the UI.

// Request

POST /v1/query-log

{

"query": "apple watch",

"session_id": "abc123",

"locale": "en-US",

"timestamp": "2026-04-01T12:34:56Z",

"selected_suggestion": true // did user pick from dropdown?

}

// Response 202 Accepted, do not wait on thisSecurity & PII L6+, Before publishing to Kafka, the ingest layer must strip or hash any PII: IP addresses, authenticated user IDs, and any query text that matches a PII pattern (emails, phone numbers). Query text itself may be sensitive - apply a content filter before ingestion. For GDPR compliance, set a data retention window on the query event store and implement right-to-erasure by hashing the user identifier rather than storing it raw. Signals that a candidate has thought about this at L6: knowing where in the pipeline PII is removed (at ingest, before Kafka) rather than downstream.

| Endpoint | Level | Notes |

|---|---|---|

| GET /v1/autocomplete | L3/L4 | Core read path, required |

| POST /v1/query-log | L3/L4 | Feeds the scoring pipeline, required |

| GET /v1/autocomplete/trending | L5 | Returns trending prefixes for UI surfacing |

| DELETE /v1/autocomplete/term | L6 | Admin: remove offensive or incorrect suggestions |

| POST /v1/autocomplete/boost | L7/L8 | Ops: manually boost a prefix for campaigns/events |

Core flow

The complexity lies in the caching hierarchy and the fallback behaviour. The freshness NFR from §2 drives the key decision: whether to serve from edge cache or fall through to origin.

The CDN HIT path resolves in <10ms. The MISS path, including trie lookup and trending merge, resolves in <30ms, leaving headroom within the 100ms p99 budget.

Key tradeoff, CDN TTL: A 60-second TTL means stale results for up to 60 seconds after a score update. For evergreen prefixes (like "weather" or "news") this is fine. For a breaking news event, stale results actively mislead users. The freshness NFR from §2 justifies selective TTL overrides: the Trending Service pushes CDN invalidation events for any prefix whose score delta exceeds a threshold, effectively reducing TTL to near-zero for hot trending terms.

Data model

Four entities shape the data model, and they are used very differently. Understanding their access patterns first prevents choosing the wrong storage engine or schema.

Entities and access patterns

| Entity | Operation | Frequency | Query shape |

|---|---|---|---|

| Trie node | Prefix lookup | Very high (every autocomplete request) | Key = prefix string → top-k list |

| Query score | Score read (batch) | Medium (hourly Trie Builder job) | Scan all terms, compute aggregate score |

| Query event log | Append (write) | High (every completed search) | Time-range scan per pipeline window |

| Trending scores | Read + write | Medium (every Flink output, every AC request) | Key = prefix string → trending score delta |

Two things jump out. First, the trie lookup is by far the hottest read, it must be in memory. Second, the query event log is write-heavy and append-only, which means it belongs in a durable append log (Kafka) feeding an object store, not a transactional database.

trie_node lives in Redis (in-memory key-value store) keyed by prefix string. query_score lives in a relational store (PostgreSQL). trending_score lives in Redis with short TTL. query_event_log flows through Kafka to cold storage.

Why store top_k as JSON inside the trie node? ›

Storing the entire top-k list inline at each node avoids any traversal to gather child results at query time. It trades storage space (each node duplicates data already present at ancestor nodes) for read speed. For a 100M-term trie at k=10, the storage overhead is approximately 100M × 10 suggestions × 30 bytes ≈ 30GB, feasible on a few large RAM hosts, unmanageable for k=100. This is why k is kept small (5–10).

Caching strategy

Autocomplete is an unusually cache-friendly workload: the same popular prefixes are queried millions of times per hour, results are small (5–10 strings), and the cache key is simply the prefix string plus locale, making cache hits trivially deterministic. The latency NFR from §2 makes caching mandatory - not optional.

Four-layer caching hierarchy. The top 1% of prefixes (about 1M entries) drive over 80% of all requests, CDN hit rate for these is >95% after warm-up.

| Layer | Tech | TTL | Invalidation | Why it exists |

|---|---|---|---|---|

| L1 Client | Browser JS Map | 30s (session) | Tab close / session end | Eliminates redundant network calls for the same prefix within one session |

| L2 CDN Edge | Cloudflare / Fastly | 60s | Purge API on trending update | Sub-20ms responses for the vast majority of requests; needed for the 100ms NFR (§2) |

| L3 Redis Trie | Redis cluster (in-memory) | None (persistent) | Atomic key rename on trie swap | Single source of truth for prefix → top-k; must be in memory for <5ms reads |

| L4 Fallback | Static JSON at CDN | 24h | Deploy on trie rebuild | Availability NFR (§2), serves stale but correct results during trie rebuild or Redis failure |

Cache invalidation

The hardest part of this cache hierarchy is the CDN layer. Since autocomplete results are cacheable by prefix key (an exact-match query), invalidation is precise, only the affected prefix needs to be purged, not the entire cache. The Trending Service publishes invalidation events to a CDN Purge API whenever a prefix's trending score crosses a threshold. This means frequently-changing hot prefixes get near-real-time updates, while stable prefixes (like "weather") coast on the 60-second TTL.

Deep-dive: scalability

At L5 and above, the interviewer wants to see how the system holds together under load, during failures, and across regions. The capacity numbers from §3 set the context: ~3K RPS average, ~17K RPS at peak, ~15GB trie in memory.

Production: multi-region active-active. Each region has its own Redis trie cluster and Query Service fleet. Trie snapshots are distributed from the Trie Builder to all regions. Kafka is global; Flink outputs are per-region.

Trie sharding strategy L5 ›

Shard the trie by first character (or first two characters for high-traffic letters). Each shard is served by a dedicated Redis node (an in-memory key-value store) with read replicas. A lookup for prefix "apple" routes to the "a" shard. This avoids cross-shard fan-out for any single query, the entire prefix lives within one shard.

Zero-downtime trie swap L5/L6 ›

The Trie Builder writes the new trie snapshot under a versioned key namespace (e.g.

trie:v2:{prefix}). Once all keys are written, it atomically updates a single

pointer key: SET trie:current_version "v2". Query Services poll this pointer on

a short interval (1–5s) and switch their read namespace on the next request after the

pointer changes. There is a brief window where some Query Service instances read v1 and

others read v2, acceptable because score changes are gradual, and results shift

incrementally rather than abruptly.

Note: Redis

RENAME takes exactly two keys, a single source and single destination. It

cannot operate on glob patterns. The pointer-key approach is the correct production

mechanism.

Streaming score pipeline (Kafka + Flink) L6 ›

Every submitted query is published as an event to a Kafka topic partitioned by hashed prefix. A stateful stream processing job (Flink) windows over 5-minute tumbling windows and emits per-prefix count increments. These increments are applied to the Trending Service's score store. Flink maintains checkpoints every 30 seconds so it can replay from the last checkpoint after a failure without losing count state. This design meets the <15-minute freshness NFR from §2 without touching the base trie.

Multi-region active-active and locale-specific tries L7/L8 ›

Each geographic region (US, EU, APAC) runs its own Redis trie cluster and Query Service fleet. The Trie Builder produces per-locale trie snapshots (en-US, fr-FR, ja-JP) and distributes them to the appropriate regions. A request from Paris routes to the EU region's fr-FR trie. For global popularity signals (e.g. "ChatGPT" trends everywhere), a global score overlay is merged at query time on top of the locale-specific base trie.

Failure modes & edge cases

| Scenario | Problem | Solution | Level |

|---|---|---|---|

| Redis trie unavailable | All origin reads fail; no suggestions served | Query Service falls back to static top-500 prefix list cached at CDN edge; returns partial results for unknown prefixes | L3/L4 |

| CDN cache poisoning | Incorrect results cached and served to millions of users before expiry | Cache key includes a checksum of the response; Query Service validates on cache write; CDN purge API triggered immediately on detection | L5 |

| Trie rebuild takes longer than expected | Old trie served for hours; freshness NFR violated | Trending Service carries the recency signal independently; it continues serving score deltas even while base trie is stale. Alert if rebuild exceeds 2× expected duration. | L5/L6 |

| Flink consumer lag spikes | Trending scores lag by more than 15 minutes; freshness SLA breached | Monitor consumer group lag; autoscale Flink task slots; add a dead-letter topic for malformed events to prevent stuck consumers | L6 |

| Offensive / spam terms appear in suggestions | Bad actors inflate query counts for harmful terms; suggestions surface inappropriate content | Block list applied at Query Service layer before returning results; anomaly detection on per-term count velocity to catch sudden artificial spikes; separate moderation pipeline reviews flagged terms | L5 |

| Hot shard (e.g. "a"-prefix shard) | A single Redis shard receives disproportionate load; p99 latency spikes | Sub-shard hot first characters; add read replicas to hot shards; monitor per-shard QPS and add replicas dynamically | L7 |

| Multi-region split-brain (network partition) | EU region continues serving stale trie while US region gets fresh updates | Each region is independent, stale results are acceptable per availability NFR. Recovery: Trie Builder pushes snapshots to all regions independently; partitioned regions self-heal on reconnect. | L7/L8 |

How to answer by level

The same system, described at different depths, distinguishes a junior engineer from a staff engineer. Here is what changes at each level.

L3 / L4 SDE I / SDE II ›

- Trie data structure with top-k stored at each node

- Simple LRU cache in front of trie lookups

- Basic query log → score computation pipeline

- Can explain time complexity: O(prefix length) lookup

- Understands why autocomplete needs sub-100ms response

- Understanding that a single trie host can't serve peak QPS

- Awareness of the freshness vs latency tradeoff

- Knowing where to use CDN vs Redis vs in-process cache

- Reasoning about the write path (score updates)

L5 Senior SDE ›

- Designs the CDN edge cache layer with correct invalidation reasoning

- Proposes trie sharding by prefix range

- Explains the batch (Spark) + streaming (Kafka/Flink) hybrid for score freshness

- Handles the failure modes table fluently (Redis down, shard hot)

- Client debounce reasoning tied to QPS numbers

- Zero-downtime trie swap mechanism

- Trending Service as a separate overlay (not inline trie update)

- Selective CDN invalidation vs full cache flush

- Cross-region replication strategy for tries

L6 Staff SDE ›

- Owns the end-to-end design including operational concerns

- Discusses locale-specific tries vs shared trie with locale filtering

- Reasons about Flink checkpointing, lag monitoring, autoscaling

- Proposes personalisation re-ranking layer and its integration point

- Connects all architectural decisions back to specific NFRs

- Active-active multi-region with failure isolation

- Cost model: memory vs shard count vs read replica count

- Experimentation framework for ranking weight changes

- Capacity planning beyond the interview numbers

L7 / L8 Principal / Distinguished ›

- Questions whether trie is the right structure vs a distributed prefix index

- Proposes an experimentation framework for A/B testing ranking signals

- Designs multi-region active-active with explicit failure isolation guarantees

- Discusses total cost of ownership: memory, CDN egress, Flink cluster cost

- Identifies make-vs-buy decision (Elasticsearch vs custom trie)

- Frames the build/buy tradeoff with concrete team and maintenance cost reasoning

- Proposes SLO definitions and runbook structure

- Discusses ML-based ranking and its infrastructure needs

- Connects architecture choices to business metrics (search engagement, CTR)

Classic probes, level-differentiated answers

| Probe | L3/L4 | L5/L6 | L7/L8 |

|---|---|---|---|

| "How would you make suggestions fresher?" | Re-build the trie more often | Streaming pipeline (Kafka + Flink) updating a trending overlay; base trie rebuilt hourly | Hybrid: streaming for trending delta + batch for global score; per-region pipelines with cross-region consistency tradeoffs |

| "How do you handle a sudden viral term?" | It'll appear after the next rebuild | Flink 5-minute window pushes score delta to Trending Service; selective CDN purge for the affected prefix | Count velocity anomaly detection triggers priority flush to Trending Service and CDN purge; PagerDuty alert on count spike > threshold |

| "Why not just use Elasticsearch for autocomplete?" | A trie serves prefix queries in microseconds from memory; Elasticsearch adds network hops and query parsing overhead for a workload that needs none of that flexibility | Elasticsearch prefix queries at scale require significant cluster resources; a trie trades operational simplicity for lower latency and cost on this specific access pattern | ES is a defensible choice for teams needing fuzzy matching, multilingual support, and don't want to own trie infrastructure. At Google-scale, neither is right, a custom distributed prefix index outperforms both. |

| "How do you personalise suggestions?" | Store user history and filter results | Re-ranking layer in Query Service: fetch global top-k from trie, then re-score using user's query history vector from a fast KV store; merge with global results | Two-tower model: global trie produces candidates; a personalisation model (served from a low-latency inference endpoint) re-ranks. Experimentation framework A/B tests ranking weights. User embedding stored in a feature store. |

Common follow-up questions

During the interview, the interviewer may pivot to probe your knowledge of edge cases and extensions.

Typo tolerance (Fuzzy matching) L5+ ›

If the user types "appel" instead of "apple", a pure trie returns nothing. The standard extension is a post-filter: the trie produces candidates for the longest valid prefix, then a BK-tree (a metric tree for edit distance) or a character n-gram inverted index re-ranks by Levenshtein distance. This is optional for a basic autocomplete system but interviewers frequently ask about it at L5+. Frame it as a separate service concern, the trie stays pure-prefix; the fuzzy layer normalises the query before it reaches the trie.

How is the score field actually computed? L5/L6 ›

The score column in query_score is a composite of three signals, computed by the Trie Builder's batch job:

1. Frequency component, raw query count within a retention window (typically 90 days), log-scaled to prevent extremely viral terms from completely drowning out steady popular terms:

freq_score = log(1 + count_90d)2. Recency component, an exponential decay applied to each daily count bucket, so that queries from last week outweigh queries from last month. Log-scaled for the same reason as the frequency component, raw decay sums can reach millions for popular terms:

recency_score = log(1 + Σ count_day_i × e^(−λ × days_ago_i))

// λ = 0.1 gives a half-life of ~7 days [ln(2)/0.1 ≈ 6.93]3. Final composite, both components are now on a comparable log scale (roughly 0–20 for realistic query volumes), so α is a meaningful weight:

score = α × freq_score + (1 − α) × recency_score

// α ≈ 0.6 weights steady popularity over pure recency

// Without the log on recency_score, raw decay sums would dwarf freq_score regardless of αDeduplication: A user submits the same query twice (double-tap). Won't that inflate the score? L5 ›

The Flink pipeline deduplicates by (session_id, query, 5-minute window) before aggregating counts. A repeated submission within the same window increments the count by at most 1. This requires Flink's keyed state to be partitioned by session_id, an important detail for the implementation.

Multi-word queries: If a user types "apple w", should autocomplete complete the last word, or the full phrase? L5/L6 ›

Two strategies:

(1) Last-token completion, split on space, run trie lookup on "w", prepend "apple " to all results. Fast, but ignores inter-word context.

(2) Full-phrase trie, index complete query strings (e.g. "apple watch", "apple store") as trie entries; "apple w" traverses to the "apple w" node and reads its top-k. Strategy 2 is more relevant but grows the trie with multi-gram entries. Common phrases are indexed explicitly; rare phrases fall back to last-token completion.

How the pieces connect

Every major design decision traces back to a requirement. These are the most important chains.